
I am a PhD candidate in the Department of Electrical and Computer Engineering at the University of California, Riverside (UCR), where my research focuses on trustworthy AI. I am fortunate to be advised by Professor M. Salman Asif. Prior to joining UCR, I received my Bachelor's degree from Huazhong University of Science and Technology in 2022.

CV / Scholar / GitHub / Hugging Face / Lab


Jul. 2025: Presenting our work SLUG at ICML 2025 (Vancouver, BC).

Dec. 2024: Presenting our work SLUG at the SafeGenAi Workshop, NeurIPS 2024 (Vancouver, BC).

Jun. 2023: Presenting EBAD, a joint work with Zikui Cai, at CVPR 2023 (Vancouver, BC).


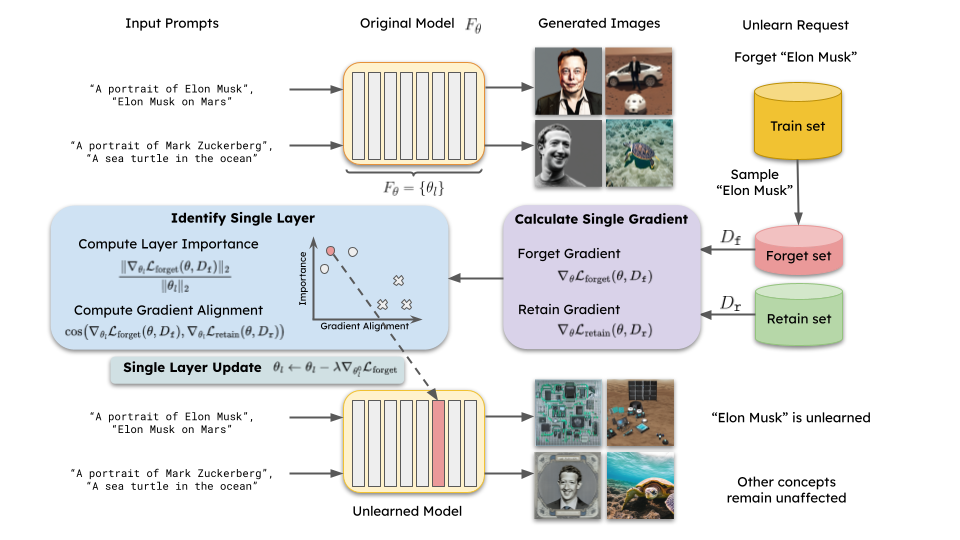

Zikui Cai*, Yaoteng Tan*, M. Salman Asif

ICML, 2025

arXiv / code / project page

TL;DR: We propose a way to remove "concepts" from vision-language foundation models via a single-step update to a single layer, which is highly efficient and scales to large models. The method supports applications such as removing harmful biases from models, correcting model errors, and protecting data privacy.


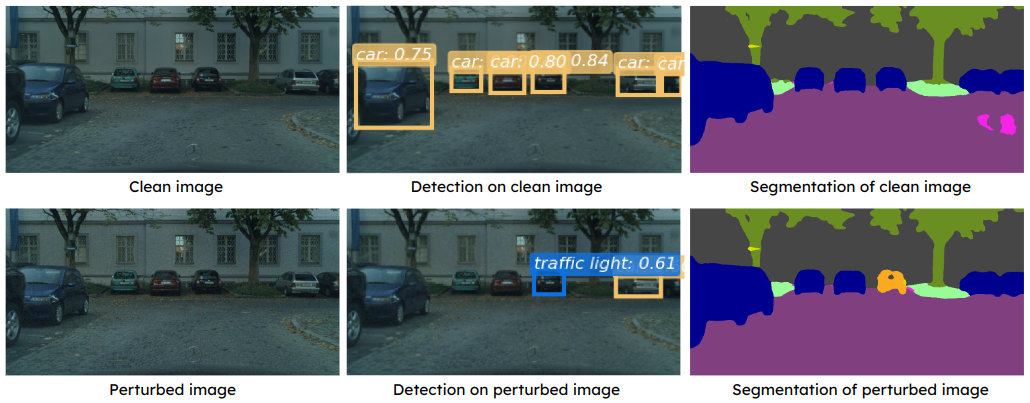

Zikui Cai*, Yaoteng Tan*, M. Salman Asif (* Equal contribution)

CVPR, 2023

arXiv / open access / code / poster

TL;DR: We propose a query-efficient approach for blackbox attacks against computer vision models. Spotlight: our proposed method can generate a single perturbation that fools multiple blackbox detection and segmentation models simultaneously, demonstrating generalizability across different tasks.


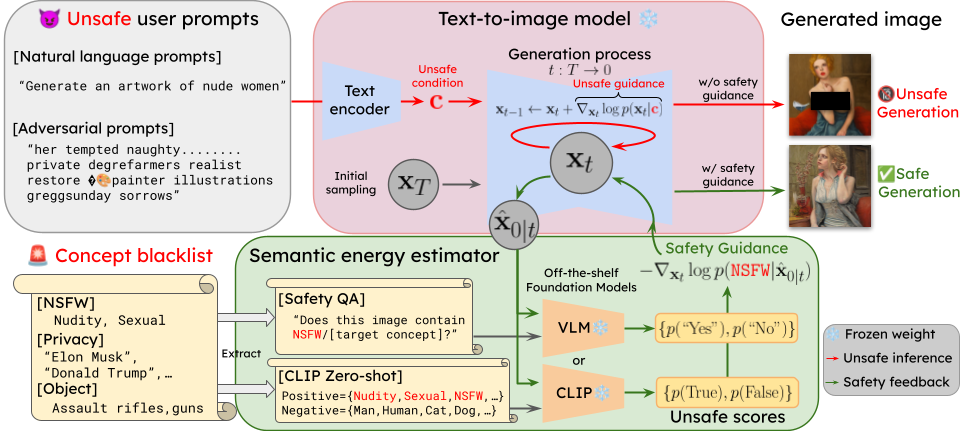

Yaoteng Tan, Zikui Cai, M. Salman Asif

Preprint (New)

arXiv

TL;DR: We propose a highly effective, scalable method for ensuring safe generation in text-to-image generative models. It integrates off-the-shelf vision-language foundation models, which are pre-trained to encode rich semantic information, as plug-in oversight models for responsible text-to-image generation.


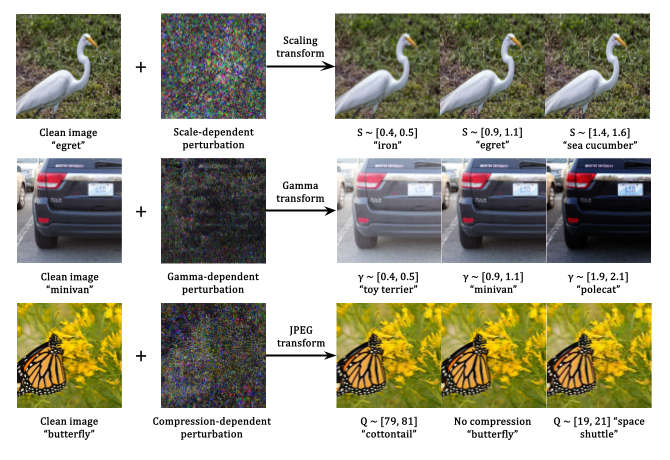

Yaoteng Tan, Zikui Cai, M. Salman Asif

Pending acceptance to TMLR, June 2026 (New)

arXiv

TL;DR: We explore the transform-dependent properties of adversarial examples and propose a method to generate perturbations that are effective under various image transformations. Through camera experiments, we demonstrate that this dynamic property persists even in the physical world, which can inform the design of more robust adversarial attacks and defenses.


Conference reviewer:

Teaching Assistant:

Acknowledgement: template from Jon Barron